AI integration in fintech means connecting AI models to existing financial systems so they operate in production. This happens all the time in things like payment processors, core banking infrastructure, KYC providers, and data pipelines.

The four AI integration patterns in fintech are API-based wrappers, embedded model deployment, data pipeline augmentation, and agentic orchestration.

Having skilled fintech talent on your team lets you make better decisions on which one to use in your specific scenario, as each requires different infrastructure, compliance controls, and team capabilities.

Most fintech AI projects fail because the integration is wrong, so let’s look at what fintech AI integration actually requires.

If you need the talent to make your fintech software development a success, view capabilities.

Key Takeaways

- Gaps between pilot and live deployment stem primarily from integration and team problems, not model quality.

- Different AI integration patterns require fundamentally different infrastructure, compliance controls, and team skills.

- Many AI implementation failures trace back to data unreadiness.

- High-risk AI use cases in fintech (credit scoring, AML screening) require a compliance build time of 8–12 weeks minimum.

- Regulatory frameworks, including SR 11-7, the EU AI Act, ECOA, and DORA, need to be identified as early as possible.

What AI Integration Actually Means in Fintech

The term “AI integration” tends to get used loosely.

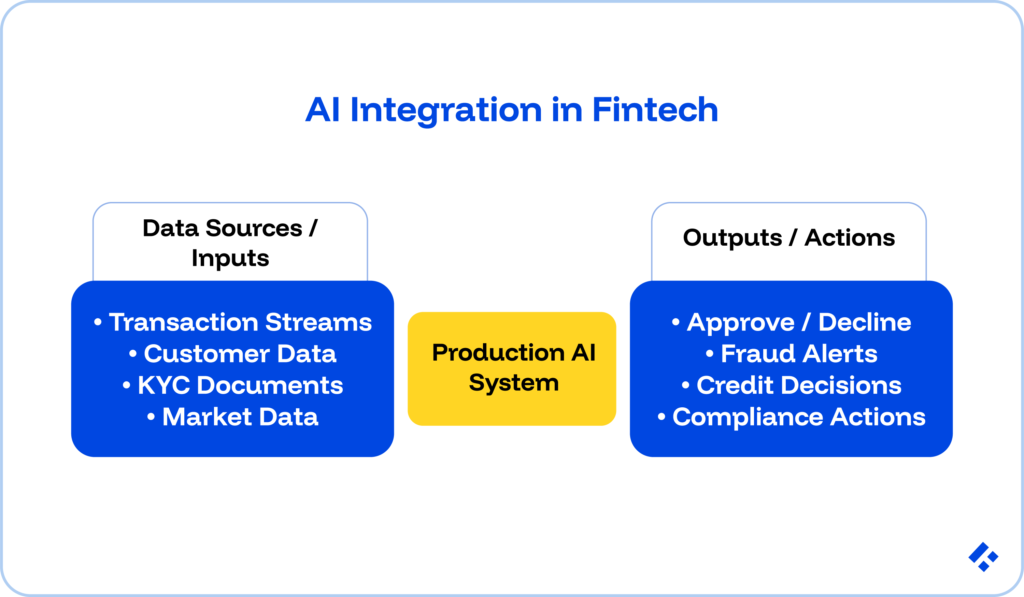

In the cases we are speaking about here, it describes connecting an AI model or system to production fintech infrastructure so that the AI’s outputs directly influence real financial decisions or customer interactions.

From what we have seen, there are three requirements that separate genuine AI integration from AI experiments.

First, you are working with production data rather than clean samples. Think of things like transaction streams, customer records, market feeds, and KYC documents. In real-world cases, these tend to be less standard and more compliance-sensitive than training data.

Second, you need to ensure real-time or near-real-time output. In the case of fraud detection, this is milliseconds. Credit decisioning is also reduced to several seconds.

Batch-only systems, which a lot of larger institutions still use, function more like AI reporting than AI integration.

Finally, there are serious downstream consequences. The AI output usually changes something. It approves or declines a transaction, triggers a compliance alert, or adjusts a credit limit.

If nothing in the product changes because of the AI output, the integration has not actually occurred.

You need to take all of these factors into account before you start to fully understand the engineering scope that you are getting into, and prevent issues that could have otherwise been avoided.

Related Reading: AI in Fintech Benefits and Use Cases

Readiness Assessment

36% of AI implementation failures trace back to data unreadiness.

Conducting a readiness assessment can help you determine whether your integration reaches production or stalls three months in, and will potentially save you a significant part of your engineering budget.

Data readiness

Financial data often creates structural problems for AI integration.

Inconsistent API schemas across banking providers, missing fields in transaction records, mixed formats in legacy systems, and PII create compliance exposure if they enter model inference pipelines.

You need to think about whether or not you have a unified data layer that the AI system can query reliably. Are data schemas documented and versioned?

Infrastructure readiness

Most fintech stacks were not built for model inference at scale.

Real-time fraud detection requires low-latency inference endpoints. LLM-based features require GPU infrastructure or managed inference APIs.

Consider whether your current cloud setup can handle model inference alongside transactional workloads without SLA degradation.

Compliance readiness

High-risk AI applications in fintech, like credit decisioning, AML/KYC screening, and insurance underwriting, all require explainability, bias testing, audit logging, and model documentation.

This needs to happen before regulators accept their use in production.

Building without these controls and retrofitting them later is incredibly difficult and often leads to big mistakes.

In our experience, this ends up costing significantly more than designing them from the start.

Team readiness

AI integration requires engineering skills that most fintech teams do not have at sufficient depth.

We would strongly recommend that you consider a specialist ML engineer for model deployment and monitoring, data engineers for feature pipeline construction, and integration engineers for connecting AI outputs to existing systems.

Hiring these niche positions might cost more money upfront, but it will end up proving to be the more cost-effective option in the long run.

The Four AI Integration Patterns in Fintech

The integration pattern you choose will determine the total engineering complexity you need, the latency profile, the compliance surface, the different team skills and roles required, as well as how you should move forward.

Pattern 1: API-Based AI Integration (Wrapper Approach)

Your fintech product calls an external AI model via API. The most commonly used examples out there are OpenAI, Anthropic Claude, AWS Bedrock, and Google Vertex AI.

After that, the model’s response feeds directly into your product logic.

In short, your infrastructure does not host the model.

When this works well: LLM-based features like customer support agents, document summarization, and internal knowledge retrieval. Rapid prototyping. Use cases where the response latency of 1–5 seconds remains acceptable. Teams without ML infrastructure in-house.

Fintech-specific constraints: PII and financial data cannot be accessible in any way, even in prompts sent to external APIs, without explicit data processing agreements and data residency evaluation under GDPR and applicable banking regulations.

Engineering requirements: API integration engineers, prompt engineers, output validation framework, PII scrubbing layer before API calls, and fallback logic for API unavailability.

Pattern 2: Embedded Model Deployment

In this case, the AI model runs in your own infrastructure. It’s on-premises, in your cloud VPC, or in a managed deployment on AWS SageMaker, Google Vertex, or Azure ML.

This is definitely more secure as data does not leave your environment.

When this works well: High-sensitivity use cases where financial data cannot leave your infrastructure (credit scoring, AML screening, fraud detection on transaction data). Low-latency requirements at sub-100ms. Regulatory environments requiring data residency.

Fintech-specific constraints: The infrastructure you need will probably cost substantially more than API calls at low volume, so you need the scale to justify the investment. Embedded deployment also requires MLOps infrastructure like model versioning, A/B testing, performance monitoring, drift detection, and retraining pipelines.

Engineering requirements: ML engineers with model deployment and MLOps experience, DevOps/cloud engineers for inference infrastructure, data scientists for model validation.

Pattern 3: Data Pipeline Augmentation

Here, AI and ML models sit inside your data processing pipelines rather than your product’s request-response flow. These need to be able to handle data transformation, enrichment, and feature engineering that feeds into all of your other systems.

When this works well: Risk scoring and credit model outputs consumed by underwriting systems. Transaction enrichment, such as merchant categorization and spend pattern labeling. Batch-mode fraud scoring for review queues. Feature engineering for downstream ML models.

Fintech-specific constraints: Audit trail requirements mean pipeline outputs must be logged with model version, input features, and timestamp. Data lineage documentation is increasingly required by regulators for AI-generated features used in credit or risk decisions.

Engineering requirements: Data engineers with streaming pipeline experience, ML engineers for feature engineering, and data architects for lineage and audit logging design.

Pattern 4: Agentic AI Orchestration

AI is at a point now where agents can execute multi-step workflows autonomously. In the FinTech context, this could include retrieving data from multiple systems, making decisions, taking actions, and handing off to human review at defined escalation points.

The AI orchestrates an entire process rather than returning a single response.

When this works well: Complex compliance workflows including AML case investigation, KYC document review, and SAR generation. Credit underwriting pipelines involving document retrieval, bureau queries, and adverse action notice drafting. Customer service flows require account data access across multiple systems.

Fintech-specific constraints: Agentic AI systems in regulated contexts require explicit audit logging at every decision point. Human-in-the-loop escalation must exist.

Engineering requirements: Agentic AI engineers with LLM orchestration framework experience (LangGraph, LlamaIndex Workflows), integration engineers for multi-system data access, security engineers for access control design.

Build vs. Buy: A Decision Framework for Fintech AI

Every fintech AI integration that we have ever worked on reaches a build-vs-buy decision on the model layer.

In most cases, the integration infrastructure gets built. The choice comes in with whether the underlying model comes from a vendor, gets fine-tuned from an open-source base, or gets built from scratch.

Buy a vendor model when:

- The use case is standard and not proprietary (customer support chatbots, document OCR).

- Your data volume is insufficient for meaningful fine-tuning

- Speed-to-production is critical.

- Your budget falls under roughly $200,000.

- The use case does not require data residency.

A good example of this is how JPMorgan’s LLM Suite uses OpenAI and Anthropic models for internal knowledge retrieval.

Build or fine-tune when:

- Proprietary data is critical.

- Data residency requirements prevent sending financial data externally.

- The model must deliver decision-level explainability under ECOA or SR 11-7.

- Your latency requirements fall below 100ms.

Most fintech AI integrations that we have worked on end up hybrid. In other words, they buy for the majority of use cases, build or fine-tune for the one or two where proprietary data or regulatory requirements justify the cost.

Related Reading: Fintech Vendor Risk Management

Four-Phase Implementation Roadmap

The most common failures come from teams without the right resources attempting to go from zero to production AI in a single project cycle. Here is a better way forward.

Phase 1: Use Case Selection and Readiness (Weeks 1–3)

Figure out one prioritized use case. Prepare for that specific instance with a readiness assessment, and select your integration pattern.

A good first use case has a clear measurable success metric, sufficient accessible training data, a regulatory risk level that matches current team capability, and a business stakeholder committed to adopting the output.

Be very careful not to begin development until the use case, integration pattern, data availability, and regulatory risk classification are documented and signed off.

Phase 2: Prototype and Data Validation (Weeks 3–6)

Here, you build a working prototype connected to sample production data and initial model performance benchmarks.

Once the prototype is ready, you should start testing it against real data to help you surface data quality issues before they become production failures.

Your prototype must hit minimum performance thresholds on real data before proceeding.

Related Reading: AI Integration and Data Bias: Responsible AI in Fintech

Phase 3: Production Integration and Compliance Build (Weeks 6–14)

The next phase is to get this model deployed in production with full compliance controls, monitoring, audit logging, and human-in-the-loop escalation

A lot of the people we work with underscore this.

Production AI integration covers model deployment, along with other things like real-time feature pipelines, inference serving, latency monitoring, output validation, audit logging, explainability layer, bias testing documentation, drift detection, and incident response plan.

It’s good to allow 8–12 weeks minimum for high-risk use cases (credit scoring, AML screening) and 4–6 weeks for lower-risk ones.

Under no circumstances should you go live until compliance controls, audit logging, and human escalation are fully operational.

Phase 4: Monitoring, Retraining, and Scaling (Ongoing)

Live models with defined retraining cadence, performance monitoring dashboards, and an expansion roadmap should be your final goal.

To get there, you need to define retraining triggers like drift detection thresholds and performance metric drops before go-live.

Also, define the team responsible for ongoing monitoring to prevent production risk the first time the model encounters data it was not trained on.

Related Reading: Scaling Fintech Infrastructure for Hyper-Growth

Model Risk and Regulatory Governance: What Actually Applies

AI models that influence financial decisions in regulated contexts are subject to certain risk management obligations. Here are some regulatory frameworks that apply specifically to regulated fintech deployments for you to consider.

- SR 11-7: Increasingly applied to AI and ML models used in credit underwriting, pricing, and fraud detection. Requires model documentation, independent validation, ongoing performance monitoring, and clear escalation procedures when performance degrades.

- EU AI Act: Credit scoring, AML screening, and insurance risk assessment qualify as high-risk AI and require conformity assessments, human oversight mechanisms, transparency obligations, and registration in the EU AI database before deployment.

- ECOA and FCRA: Any AI model influencing credit decisions must explain why a credit application was declined. Black-box models that cannot produce this explanation are not legally deployable for US consumer credit decisions.

- DORA: Third-party risk management requirements apply to external AI API providers.

The Engineering Team Structure for AI Integration

As you might have already realized, AI integration in fintech requires a cross-functional team that most fintech engineering organizations do not have at full strength. This includes:

- ML Engineers (1–3): Model deployment, MLOps infrastructure, inference serving, performance monitoring, and retraining pipeline management.

- Data Engineers (1–2): Real-time feature pipeline construction, data quality validation, lineage tracking, and audit log infrastructure.

- Integration Engineers (1–2): Connecting AI model outputs to existing fintech systems.

- Compliance/Explainability Engineer (1 or shared): Designs and implements audit logging, model explainability output, and bias monitoring systems required for regulated AI deployments.

If you have a growth-stage fintech, you probably only have one or two of these roles in-house at the required seniority level.

The remaining three to five are either absent or filled by engineers who need 3–4 months of ramp time to reach productivity in a fintech AI context. That ramp period quietly consumes project budget and delays timelines before anyone formally names the problem.

Final Thought

Hiring the right people, with the right experience and knowledge of the fintech regulatory environment, is absolutely essential, especially when you are integrating AI models into workflows that deal with sensitive information.

Hiring these engineers can take months. And getting them onboarded to a point where they can start contributing effectively takes even longer.

At Trio, we help you prevent those delays, so you can focus on development. We have pre-vetted developers on hand, who have the industry experience required to start being productive in 3-5 days.

To connect with cost-effective FinTech specialists, request a consult.

Frequently Asked Questions

What is AI integration in fintech?

AI integration in fintech means connecting AI models to production financial systems so their outputs directly influence real financial decisions.

What are the four AI integration patterns used in fintech?

The four patterns for integrating AI into fintech are API-based wrappers, embedded model deployment, data pipeline augmentation, and agentic orchestration.

What regulatory frameworks apply to AI integration in fintech?

US-based fintechs face regulatory frameworks like SR 11-7 for model risk management and ECOA adverse action notice requirements for credit AI. EU-operating fintechs fall under the EU AI Act’s high-risk classifications and DORA for third-party AI provider risk. All regulated fintechs also face PCI DSS and privacy laws, including GDPR and CCPA.

How long does AI integration take in a fintech product?

AI integration for lower-risk use cases in fintech, like document summarization or internal chatbots, typically takes 6–10 weeks from assessment to production. Higher-risk regulated use cases, including credit scoring and AML screening, require as much as 12 months.

What makes integrating AI into fintech different from other industries?

Fintech AI integration is different from other industries because it carries a heavier compliance burden.