Building financial technology that actually holds up under pressure has quietly become one of the hardest jobs in engineering. When money and personal information are involved, even the smallest failure matters, so resilience has to be designed in from the start.

Growth alone makes the stakes obvious, with the fintech market expected to reach $652.80 billion by 2030.

If you want to get ahead, you need to build trust. Refining how your product handles massive loads, how it responds to failures, and more allows you to satisfy both regulators and users.

Failing to build resilient fintech solutions can mean fines and restricted service permissions, and ultimately a loss of clientele.

At Trio, we spend most of our time with FinTech engineering leaders, planning, designing, and building resilience into fintech products ranging from banking platforms to monitoring tools.

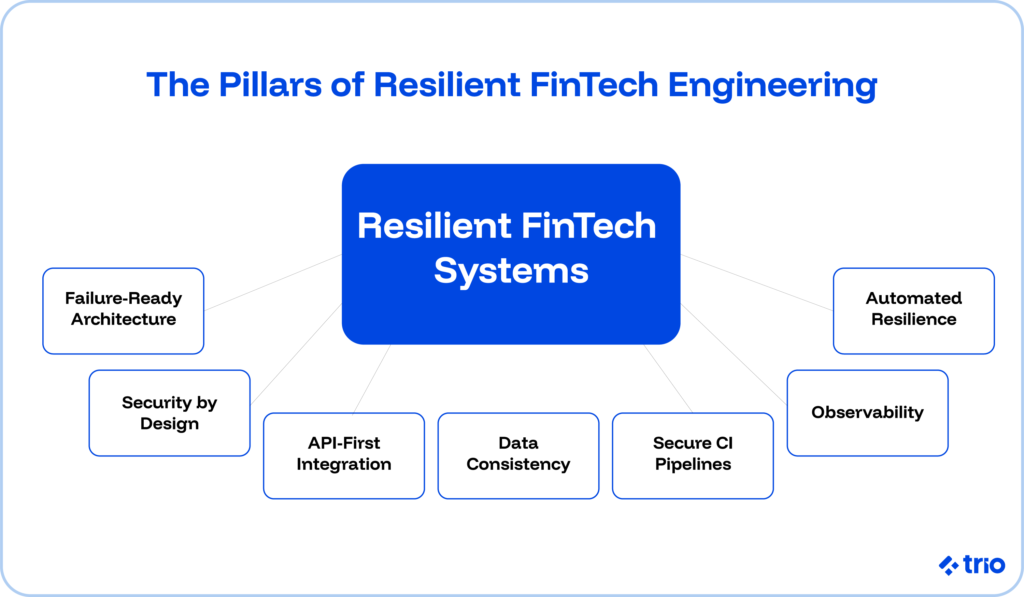

The 7 Core Principles of Resilient FinTech Engineering

There are seven core principles that our engineers implement to ensure resilience.

These principles surface in architecture reviews, sprint planning, deployment checklists, and incident postmortems.

If you can apply them consistently, complexity becomes manageable. Treat them as optional, and no process framework will save you.

1. Design for Failure: Chaos Engineering

By far the best way you can protect customer experience is by assuming components will fail and designing recovery paths in advance.

Instead of trying to eliminate failure entirely, resilient teams treat failure as inevitable and plan how the system should respond when it happens.

Fault tolerance and graceful degradation sit at the center of this mindset.

When one service goes down, the rest of the platform should continue operating in a reduced but controlled mode.

A payment confirmation delay might frustrate a user. A complete transaction blackout damages trust far more.
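One common way to implement this kind of graceful degradation is a circuit breaker. The Python sketch below is illustrative rather than a prescribed design. After a configurable number of consecutive failures, it stops calling the failing dependency for a cool-down period. During that window it serves a fallback instead, such as a "pending" payment status. The thresholds, names, and fallback value are all assumptions.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing dependency and serves a fallback instead.

    After `failure_threshold` consecutive failures the breaker "opens":
    calls go straight to the fallback until `reset_after` seconds pass,
    then one trial call is allowed through (the "half-open" state).
    """

    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock  # injectable so the cool-down can be tested
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, operation: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: fail fast in degraded mode
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open the breaker
            return fallback()
        self.failures = 0  # a success closes the breaker again
        return result
```

For example, `breaker.call(confirm_payment, lambda: "pending")` keeps the user flow alive while a hypothetical `confirm_payment` dependency is down. You would typically also emit a metric whenever the breaker opens so the degradation is visible.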

If you are serious about a good user experience, you generally need to aim for at least active resilience. That means failover mechanisms tested regularly, databases continuously synchronized, and early warning indicators tracked before they escalate.

These should be included even in your most basic MVP.

More mature teams go even further by running failover tests in production to validate that redundancy actually works. They also simulate dependency outages and capacity surges similar to those seen during peak events.

Doing this means you find gaps and fix them before they turn into live incidents.

This practice of deliberately injecting failures to verify recovery is known as chaos engineering.
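At its smallest scale, a chaos experiment wraps a dependency so that it fails at a controlled rate. It then checks that callers degrade instead of crashing. This is a minimal Python sketch under that assumption. `inject_faults`, `run_experiment`, and the stand-in balance lookup are hypothetical. Real experiments usually rely on dedicated tooling and a tightly limited blast radius.

```python
import random
from typing import Callable, Dict, TypeVar

T = TypeVar("T")


def inject_faults(operation: Callable[[], T], failure_rate: float,
                  rng: random.Random) -> Callable[[], T]:
    """Wrap a dependency call so it raises ConnectionError at roughly `failure_rate`."""
    def wrapped() -> T:
        if rng.random() < failure_rate:
            raise ConnectionError("injected fault: simulated dependency outage")
        return operation()
    return wrapped


def run_experiment(calls: int, failure_rate: float, seed: int = 7) -> Dict[str, int]:
    """Hypothetical experiment: does the caller degrade instead of crashing?"""
    rng = random.Random(seed)  # seeded so a failing experiment can be replayed
    fetch_balance = inject_faults(lambda: 10_000, failure_rate, rng)  # stand-in dependency
    outcomes = {"served": 0, "degraded": 0}
    for _ in range(calls):
        try:
            fetch_balance()
            outcomes["served"] += 1
        except ConnectionError:
            # Behavior under test: fall back (e.g. show a cached balance) and keep going.
            outcomes["degraded"] += 1
    return outcomes
```

Seeding the random generator matters. When an experiment exposes a gap, the team can reproduce exactly the same failure sequence while fixing it.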

2. Security-First Architecture: Zero Trust and Least Privilege

You protect customer trust from day one by designing security into the foundation, not bolting it on after launch.

In practice, that means evaluating every decision about data storage, service communication, and access control through a security lens before code ever reaches production.

Defense in depth anchors that mindset.

Just as when you design for failure, you don't assume that any single control will hold. Instead, you layer controls so that when one gives out, others still protect you.

Serious FinTech teams increasingly express this through zero-trust architecture.

Every request requires authentication and authorization, regardless of where it originates. Internal traffic receives no free pass.
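Here is a toy illustration of "no free pass" for internal traffic. Every request must carry a valid signature and an explicitly granted scope. The caller's network address plays no part in the decision. The scope check also reflects least privilege. The HMAC key, the `Request` fields, and the scope strings are hypothetical. Production systems usually rely on mutual TLS or signed tokens issued by an identity provider rather than a hard-coded key.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical demo key; a real deployment would load keys from a secrets vault.
SIGNING_KEY = b"demo-only-key"


def sign(service: str, scopes: str) -> str:
    """Sign the caller identity and its granted scopes together."""
    message = f"{service}|{scopes}".encode()
    return hmac.new(SIGNING_KEY, message, hashlib.sha256).hexdigest()


@dataclass
class Request:
    service: str     # caller identity claimed by the request
    scopes: str      # comma-separated grants, e.g. "payments:read"
    signature: str   # proof that the identity and scopes were issued together
    source_ip: str   # deliberately unused: being "inside" the network earns no trust


def authorize(req: Request, required_scope: str) -> bool:
    """Authenticate and authorize every request, internal or external."""
    expected = sign(req.service, req.scopes)
    if not hmac.compare_digest(expected, req.signature):
        return False  # unauthenticated, or scopes were tampered with
    # Least privilege: only an explicitly granted scope passes.
    return required_scope in req.scopes.split(",")
```

`hmac.compare_digest` is used instead of `==` so that the comparison time does not leak how much of a forged signature matched.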

Threat modeling during design, not as a last-minute compliance exercise, is also valuable because it forces engineers to map realistic attack paths tied to the actual financial use case.

A lending platform faces different risks than a cross-border payments engine. Once those vectors appear on paper, countermeasures at the data, application, and infrastructure layers become concrete decisions rather than vague intentions.

Immutable audit logs and real-time anomaly detection deserve “non-negotiable” status.

In our experience, regulators often request evidence of who did what, and when; these logs are how you provide it.

Incident response teams also rely on those logs when something behaves unexpectedly.
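One way to make an audit log tamper-evident is hash chaining: each entry stores the hash of the entry before it. The Python sketch below is a minimal in-memory version under that assumption. The event fields are hypothetical. A real system would also write entries to append-only storage rather than keep them in a list.

```python
import hashlib
import json
from typing import Any


class AuditLog:
    """Append-only log where each entry stores the hash of the previous entry.

    Editing or deleting any earlier entry breaks every hash after it,
    so tampering is detectable by re-verifying the chain.
    """

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict[str, Any]] = []

    @staticmethod
    def _digest(prev_hash: str, event: dict[str, Any]) -> str:
        # sort_keys gives a canonical serialization, so the same event always hashes the same.
        payload = json.dumps(event, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode()).hexdigest()

    def append(self, event: dict[str, Any]) -> None:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        self.entries.append({"event": event, "prev": prev,
                             "hash": self._digest(prev, event)})

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True
```

Verification recomputes the whole chain. Retroactively changing an approved amount therefore fails the check even if the attacker leaves later entries untouched.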

Zero trust connects tightly to least privilege.

Each user, service, and component receives only the permissions required to perform its specific role.

For FinTech teams, that translates into strict separation between development, staging, and production environments, credential vaulting instead of shared secrets, and documented access workflows.

When lateral movement is difficult by design, breaches tend to stay contained instead of spreading.

3. API-First Design: Integration, Documentation, and Governance

Juniper Research predicted that API calls in financial services would reach 580 billion by 2027. Teams that underestimate that trajectory often struggle to integrate at scale later.

Designing for integration means assuming from day one that other systems will consume your services.

Our fintech developers have helped countless companies retrofit what should have been there from the start: clear documentation, predictable versioning strategies, consistent naming conventions, and meaningful error responses. All of these reduce friction for partners and internal teams alike.

A vague 500 error might satisfy a developer in a hurry, but it slows down everyone who consumes the API. It also takes longer to fix later than it would have taken to handle properly in the moment.
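A meaningful error response tells the client three things: what went wrong, whether retrying can help, and how to find the request in server logs. The field names below are a hypothetical convention, not a standard. RFC 9457 (Problem Details for HTTP APIs) defines a comparable, standardized shape if you prefer not to invent your own.

```python
import json
import uuid
from dataclasses import asdict, dataclass


@dataclass
class ApiError:
    code: str          # stable, machine-readable identifier partners can branch on
    message: str       # human-readable explanation
    retryable: bool    # tells the client whether retrying can succeed
    request_id: str    # correlates the response with server-side logs


def error_response(status: int, code: str, message: str,
                   retryable: bool) -> tuple[int, str]:
    """Build an HTTP status and JSON body instead of a bare 500."""
    err = ApiError(code, message, retryable, request_id=str(uuid.uuid4()))
    return status, json.dumps({"error": asdict(err)})
```

For example, `error_response(422, "INSUFFICIENT_FUNDS", "Account balance is below the transfer amount.", retryable=False)` lets a partner show the right message without opening a support ticket.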

Governance deserves early attention.

When multiple teams independently design endpoints, inconsistencies accumulate quickly.

Establishing standards up front prevents expensive rewrites down the road.

Rate limiting and monitoring should also appear in the first production release. Market volatility, payment surges, and reporting deadlines create traffic patterns that spike without warning. Resilient systems expect that unpredictability.
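A token bucket is one widely used rate-limiting algorithm. It allows short bursts up to a fixed capacity while enforcing an average rate over time. The Python sketch below assumes a single process with one bucket per client. A multi-instance API would typically keep the bucket state in a shared store instead.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable for testing
        self.tokens = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller should respond with HTTP 429 and a Retry-After hint
```

The capacity absorbs legitimate spikes, such as a reporting deadline. The refill rate caps sustained load so that one client cannot starve the rest.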

Interoperability reinforces resilience. Loosely coupled services that rely on well-defined, standard schemas can absorb upstream changes with less drama.

Tightly coupled integrations, by contrast, turn every external update into a potential outage.
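One loose-coupling technique is the "tolerant reader". The consumer validates only the fields it actually uses and ignores anything else the provider adds. The `PaymentStatus` schema below is hypothetical. It shows how a provider adding a field stops being a potential outage.

```python
from dataclasses import dataclass, fields
from typing import Any


@dataclass(frozen=True)
class PaymentStatus:
    payment_id: str
    status: str
    amount_cents: int


def parse_payment_status(payload: dict[str, Any]) -> PaymentStatus:
    """Tolerant reader: insist on the fields we use, ignore fields we don't know."""
    wanted = {f.name for f in fields(PaymentStatus)}
    missing = wanted - set(payload)
    if missing:
        # A missing required field is a real contract break, so fail loudly.
        raise ValueError(f"missing required fields: {sorted(missing)}")
    # Unknown extra fields from the provider are dropped instead of rejected.
    return PaymentStatus(**{name: payload[name] for name in wanted})
```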


4. Data Consistency: The Saga Pattern and Audit Trails

You protect revenue and regulatory standing by prioritizing correctness over speed when money moves.

In most software domains, teams can trade strict consistency for availability or performance. Financial systems rarely enjoy that luxury. You need to get things right, or you’ll lose clients.

Eventual consistency works for social feeds. It rarely works for transaction ledgers.

Strong (immediate) consistency, supported by careful database design and disciplined transaction management, reduces the risk of discrepancies that trigger audits or erode customer confidence.
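Within a single database, disciplined transaction management means a transfer's debit, credit, and ledger entries commit together or not at all. Here is a minimal sketch using Python's built-in `sqlite3` module. The schema and account names are hypothetical. Money is stored as integer cents to avoid floating-point rounding.

```python
import sqlite3


def open_ledger() -> sqlite3.Connection:
    # isolation_level=None: no implicit transactions, so BEGIN/COMMIT below are explicit.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute(
        "CREATE TABLE accounts (id TEXT PRIMARY KEY, "
        "balance_cents INTEGER NOT NULL CHECK (balance_cents >= 0))"
    )
    conn.execute(
        "CREATE TABLE entries (transfer_id TEXT, account_id TEXT, amount_cents INTEGER)"
    )
    return conn


def transfer(conn: sqlite3.Connection, transfer_id: str, src: str, dst: str,
             amount_cents: int) -> None:
    """Move money atomically: both balance updates and both ledger entries, or nothing."""
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    conn.execute("BEGIN IMMEDIATE")  # take the write lock up front
    try:
        for account, delta in ((src, -amount_cents), (dst, amount_cents)):
            cur = conn.execute(
                "UPDATE accounts SET balance_cents = balance_cents + ? WHERE id = ?",
                (delta, account),
            )
            if cur.rowcount != 1:
                raise LookupError(f"unknown account {account!r}")
            conn.execute("INSERT INTO entries VALUES (?, ?, ?)",
                         (transfer_id, account, delta))
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")  # an overdraft (CHECK violation) or bad account undoes everything
        raise
```

Because every transfer writes a matching debit and credit, the entries always sum to zero. That invariant is cheap to check continuously.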

Distributed architectures complicate the picture.

The Saga pattern offers a practical way to manage transactions that span multiple services.

Instead of relying on brittle distributed transactions, you break a long-running operation into smaller local steps.

Each step includes a compensating action that can reverse its effect if a downstream process fails. That structure preserves consistency without the fragility of two-phase commit.
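Here is an orchestration-style saga reduced to its core logic. Steps run in order, and if one fails, the compensations for the steps that already completed run in reverse order. This is an in-memory Python sketch, and the step names are hypothetical. A production saga would typically persist its progress so that recovery can resume after a crash.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SagaStep:
    name: str
    action: Callable[[], None]       # local transaction in one service
    compensate: Callable[[], None]   # semantic undo of that transaction


def run_saga(steps: list[SagaStep]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    completed: list[SagaStep] = []
    for step in steps:
        try:
            step.action()
        except Exception:
            for done in reversed(completed):
                done.compensate()  # compensations should be idempotent so they can be retried
            return False
        completed.append(step)
    return True
```

Consider a payout that reserves funds, debits the account, and then gets rejected by the processor. That payout ends with a refund followed by a release of the reservation, leaving no half-finished state.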

Detailed audit trails for every state change should be considered standard procedure.

Real-time reconciliation processes and automated consistency checks should also be conducted consistently, as they help surface issues early.
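At its core, an automated reconciliation check compares two sources of truth keyed by transaction ID. Here the sources are your ledger and a processor's settlement report. The Python sketch below assumes both have already been loaded as `{transaction_id: amount_cents}` dictionaries, which is a simplification.

```python
def reconcile(ledger: dict[str, int], processor: dict[str, int]) -> dict[str, list[str]]:
    """Compare internal ledger amounts with a processor report, keyed by transaction ID."""
    return {
        # Recorded by us but never settled: possible lost or stuck payments.
        "missing_at_processor": sorted(ledger.keys() - processor.keys()),
        # Settled by the processor but unknown to us: possible missed bookings.
        "missing_in_ledger": sorted(processor.keys() - ledger.keys()),
        # Present on both sides with different amounts.
        "amount_mismatch": sorted(t for t in ledger.keys() & processor.keys()
                                  if ledger[t] != processor[t]),
    }
```

Each non-empty bucket becomes an alert or a work item, so discrepancies are caught before they turn into audit findings.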

Ultimately, if you need to make a choice between speed and correctness, FinTech teams that consistently choose correctness tend to last.

You can always address latency issues later as tech debt. That might not create the best UX, but at least you’ll maintain your reputation and regulatory approval.

5. Secure Coding Standards: CI Pipelines and Software Minimalism

You reduce security risk by embedding secure coding standards directly into everyday workflows. If you try to create and integrate them later, not only is it more difficult, but you also run the risk of missing something or creating new issues.

In FinTech, a last-minute security review often misses systemic issues that crept in earlier, even if you have hired security experts.

Static code analysis integrated into the CI pipeline catches common vulnerability classes before code reaches staging or production.

Established frameworks, such as OWASP or CERT guidelines, provide a shared baseline across teams.

Consistency matters. Contractors, nearshore engineers, and full-time hires should follow the same standards, evaluated with the same rigor applied to performance or correctness.

That’s one of the many reasons companies love our fintech specialists, who understand these intricacies.

Software minimalism strengthens this approach.

Smaller codebases generally mean smaller attack surfaces. Restricting open ports, limiting exposed interfaces, and removing unused dependencies all reduce the number of entry points an attacker can exploit.
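Pruning starts with finding candidates. The Python sketch below uses the standard `ast` module to list declared dependencies that no source file imports. It assumes that package names match their top-level module names, which is not always true (PyYAML, for instance, is imported as `yaml`). Treat its output as a review list, not as something to delete automatically.

```python
import ast


def imported_top_level_modules(source: str) -> set[str]:
    """Collect top-level module names imported anywhere in a Python source file."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports like "from . import utils".
            modules.add(node.module.split(".")[0])
    return modules


def unused_dependencies(declared: set[str], sources: list[str]) -> set[str]:
    """Declared dependencies that no source file imports: candidates for removal."""
    used: set[str] = set()
    for src in sources:
        used |= imported_top_level_modules(src)
    return declared - used
```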

Over time, fast-moving teams accumulate libraries, feature flags, and half-retired modules. Without active pruning, complexity grows quietly.

Minimalism requires discipline.

Engineers often feel pressure to move quickly, and removing code rarely receives the same recognition as adding features. Yet in regulated environments, accumulated complexity increases risk and audit burden.

6. Observability: SLI and SLO Frameworks

One of the most effective ways we have found to reduce customer-facing incidents is to make system behavior visible before problems escalate.

Basic uptime monitoring tells you whether a service responds. Observability explains why it behaves the way it does.

Full-stack visibility spans data pipelines, application layers, infrastructure, and third-party dependencies.

Structured logging with correlation IDs lets engineers trace a single request across services without guesswork. SLI and SLO frameworks tie technical metrics to business outcomes, so leadership can see how reliability affects revenue and trust.
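Below is a minimal Python sketch of structured logging with correlation IDs, built only on the standard library. A `contextvars.ContextVar` holds the current request's ID, and a custom `logging.Formatter` stamps it onto every JSON log line. The handler and its log message are hypothetical. Many teams use OpenTelemetry or a logging library for this instead.

```python
import contextvars
import json
import logging
import uuid
from typing import Optional

# Holds the ID of the request currently being handled (context-local, so
# concurrent requests in different threads or asyncio tasks don't mix IDs).
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log line, tagged with the current correlation ID."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        })


def handle_request(logger: logging.Logger, incoming_id: Optional[str] = None) -> str:
    """Hypothetical handler: reuse the upstream ID so one trace spans every service."""
    cid = incoming_id or str(uuid.uuid4())
    token = correlation_id.set(cid)
    try:
        logger.info("payment authorized")  # every log call inside carries `cid`
    finally:
        correlation_id.reset(token)  # don't leak the ID into the next request
    return cid
```

To use it, attach `JsonFormatter()` to a handler with `handler.setFormatter(...)` and forward the ID in an outbound request header. That way, downstream services log the same value.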

The real shift happens when teams move from reactive to proactive observability.

At lower maturity levels, customers report issues first.

At higher levels, systems surface early warning signals, such as subtle increases in anomaly rates, unusual network patterns, and repeated container restarts. We discuss how to identify your own maturity level below.
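A simple way to surface an early warning signal is a rolling z-score. A new metric value is flagged when it sits more than a threshold number of standard deviations from its recent history. The Python sketch below makes several simplifying assumptions: the window size, the threshold of 3, and the warm-up length are placeholders. It also adds flagged values to the history, which widens the baseline after a spike.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags a metric value far outside its recent history (rolling z-score)."""

    def __init__(self, window: int = 30, threshold: float = 3.0, min_history: int = 10) -> None:
        self.history: deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history  # don't judge until there is a baseline

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu, sigma = mean(self.history), pstdev(self.history)
            # Guard against a flat history, where sigma is zero.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.history.append(value)
        return is_anomaly
```

Fed with, say, per-minute payment error counts, a detector like this can raise an alert before a fixed threshold would trip.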

Compliance adds another layer.

Access logs, transaction histories, and audit artifacts must remain accessible for review. As we mentioned earlier, it is cheaper to get this right from the beginning than to wait until regulators request the information.

7. Automate Resilience Into the Delivery Pipeline

Manual reviews and ad hoc checks simply cannot keep pace with modern FinTech release cycles. Instead, automating resilience allows you to adapt quickly and gives you the ability to scale.

CI/CD pipelines should embed security scanning, integration testing, and performance validation as default steps.

Static analysis can block merges when secure coding standards are violated, while automated vulnerability scanning across dependencies helps teams detect newly disclosed risks without waiting for the next release window.
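A merge gate can be a small script that reads the scanner's report and exits non-zero when findings reach a blocking severity. The sketch below assumes a JSON report with a `results` list whose entries carry `issue_severity`, `filename`, `line_number`, and `issue_text`. These keys mirror Bandit's JSON output, but check them against your scanner's documentation. The `MEDIUM` threshold is an arbitrary example.

```python
import json

SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3}


def blocking_findings(report: dict, fail_at: str = "MEDIUM") -> list[dict]:
    """Return findings at or above the blocking severity."""
    floor = SEVERITY_RANK[fail_at]
    return [f for f in report.get("results", [])
            if SEVERITY_RANK.get(str(f.get("issue_severity", "")).upper(), 0) >= floor]


def main(report_path: str) -> int:
    """Intended as a CI step: a non-zero return code fails the job and blocks the merge."""
    with open(report_path) as fh:
        findings = blocking_findings(json.load(fh))
    for f in findings:
        print(f"{f.get('filename')}:{f.get('line_number')} {f.get('issue_text')}")
    return 1 if findings else 0
```

In the pipeline, you would run the scanner first and then pass its report path to `main`, using the return value as the process exit code.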

Infrastructure as code reduces configuration drift between environments.

When staging and production differ in subtle ways, incidents become harder to diagnose.

Declarative infrastructure definitions bring consistency and auditability to the environment level, not just to application code.
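Infrastructure-as-code tools ship their own drift detection; Terraform's `plan`, for example, shows the differences between configuration and real infrastructure. The underlying idea is a recursive diff between declared and actual settings. This Python toy illustrates it, assuming both sides are available as nested dictionaries. The setting names are hypothetical.

```python
from typing import Any


def config_drift(declared: dict[str, Any], actual: dict[str, Any], path: str = "") -> list[str]:
    """List every setting where the live environment differs from its declared definition."""
    drift: list[str] = []
    for key in sorted(declared.keys() | actual.keys()):
        where = f"{path}.{key}" if path else key
        if key not in actual:
            drift.append(f"missing: {where}")        # declared but not deployed
        elif key not in declared:
            drift.append(f"undeclared: {where}")     # changed by hand, outside code review
        elif isinstance(declared[key], dict) and isinstance(actual[key], dict):
            drift.extend(config_drift(declared[key], actual[key], where))
        elif declared[key] != actual[key]:
            drift.append(f"changed: {where} {declared[key]!r} -> {actual[key]!r}")
    return drift
```

For example, declaring `{"db": {"tls": True}}` against a live `{"db": {"tls": False, "public": True}}` reports both the disabled TLS and the undeclared public exposure. These are exactly the subtle staging/production differences that make incidents hard to diagnose.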

Monitoring and alerting also benefit from automation.

Anomaly detection systems can identify unusual patterns in transaction volumes or system behavior earlier than threshold-based alerts alone. Automated failover testing scripts, run on a scheduled basis, confirm that redundancy mechanisms still function as intended.

Automation also mitigates human error.

Engineers juggle context switches, tight deadlines, and operational interruptions. Fatigue leads to misconfigured permissions or overlooked edge cases. Even a simple typo can cause major issues and can be difficult to correct.

By automating the highest-stakes parts of the pipeline, teams reduce the likelihood that routine mistakes escalate into regulatory incidents.

Understanding Your Resilience Maturity Level

Not every FinTech company operates at the same maturity level. It is important not to see maturity level as an indication of success. Instead, what matters is clarity about where you stand and how quickly you need to evolve.

Foundational Resilience Maturity

At the foundational level, resilience often depends on manual processes. Individual system owners manage backups. Outages surface through customer complaints. Recovery steps vary depending on who happens to be on call.

Early-stage startups sometimes operate this way out of necessity. Once transaction volume grows or regulated data enters the picture, the risk profile changes dramatically.

Sustainable Resilience Maturity

Active resilience marks a more sustainable stage.

Application-level monitoring, scheduled failover tests, continuous database synchronization, and proactive tracking of early signals form the baseline.

Teams rehearse recovery before production incidents force them to improvise.

High Resilience Maturity

Inherent resilience represents a higher bar. Architecture itself absorbs shocks. Anomaly detection operates at the data layer. Redundancy activates automatically without manual intervention.

Achieving this level requires coordination across engineering, security, and operations, supported by leadership that values long-term stability as much as feature velocity.

Accelerating Resilience Maturity

Three elements tend to accelerate progress.

- A blame-free culture encourages engineers to surface vulnerabilities early rather than hide them.

- A metric-driven approach measures incident frequency, repeat failures, and recovery time objectives with discipline.

- Regular scenario rehearsals simulate outages before customers ever notice them.

When these elements align, resilience shifts from aspiration to practice.

Prioritizing Resilience Across Your Technology Stack

You can allocate resources more effectively by focusing resilience investment where failure would hurt most. Not every service requires identical safeguards.

Start by mapping mission-critical systems. Transaction processing engines, identity verification layers, and payment routing components typically sit at the center. Supporting tools may tolerate higher recovery times.

Understanding interdependencies clarifies which failures cascade and which remain isolated.
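Mapping interdependencies lends itself to a small graph query: given which services depend on which, find everything a single failure would reach. The Python sketch below does a breadth-first search over the inverted dependency map. The service names in the usage example are hypothetical.

```python
from collections import deque


def impacted_by(failed: str, depends_on: dict[str, set[str]]) -> set[str]:
    """Services that directly or transitively depend on `failed`."""
    # Invert the graph: for each service, which services depend on it?
    dependents: dict[str, set[str]] = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)
    impacted: set[str] = set()
    queue = deque([failed])
    while queue:
        for parent in dependents.get(queue.popleft(), set()):
            if parent not in impacted:
                impacted.add(parent)
                queue.append(parent)
    impacted.discard(failed)  # a dependency cycle could otherwise list the failed service itself
    return impacted
```

Suppose `checkout` depends on `payment-routing`, which in turn depends on `kyc-provider`. Then `impacted_by("kyc-provider", graph)` returns both upstream services. That makes it clear the KYC vendor sits on the critical path even though customers never call it directly.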

Once critical services are identified, assess their current resilience posture.

Where do single points of failure exist? Which components lack redundancy or monitoring? A structured review often reveals uneven investment, with some systems over-engineered and others under-protected.

Third-party dependencies deserve particular scrutiny. Cloud providers, payment processors, KYC services, data feeds, and banking APIs all introduce external risk.

Contracts should specify resilience expectations.

Contingency plans should outline fallback procedures if a critical provider experiences downtime. Over time, external outages will occur. Preparing for them reduces chaos when they do. We always recommend that our clients have at least one alternative vendor ready.

How Engineering Teams Can Improve Resilience

The best way to strengthen system resilience is to hire engineers who understand what regulated software demands, like those at Trio.

Architecture diagrams and security policies do not implement themselves. The people writing code, reviewing pull requests, and responding to incidents ultimately determine whether principles survive real-world pressure.

Many FinTech companies rely on engineers who can move quickly without cutting corners. That balance sounds simple in theory. In practice, it narrows the talent pool.

Security by design, strict environment separation, distributed transaction management, API governance, and audit readiness: these skills rarely develop in purely consumer product environments.

An engineer who treats least privilege as optional overhead or who has never handled transactional consistency under load introduces structural risk.

That risk may stay invisible for months. It tends to surface during peak volume, regulatory review, or an attempted breach. By then, the cost of remediation grows steeply.

For teams scaling quickly, the hiring bar needs to reflect those stakes. Whether you are onboarding full-time staff or nearshore engineers, screening should evaluate more than coding fluency.

Experience in regulated domains, familiarity with secure coding frameworks, and comfort operating distributed systems under scrutiny all matter.

Building the Culture That Sustains Technical Resilience

The best way to build a culture of stability is to cultivate habits that reinforce technical discipline. Even the best architecture erodes if delivery pressure consistently overrides safeguards.

Blameless incident response

When outages or vulnerabilities surface, teams that focus on root causes rather than individual faults learn faster.

Engineers become more willing to report near misses and expose fragile integrations. Over time, that transparency reduces repeat failures.

Measurement

Recovery time objectives, mean time to detection, incident frequency, and deployment cadence provide tangible signals of system health.

SLI and SLO frameworks translate reliability into business impact, which helps non-technical leaders understand why resilience investment matters. When metrics remain visible, tradeoffs become explicit rather than accidental.
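The arithmetic behind an SLO is simple enough to show directly. The SLI is the ratio of good events to total events. The error budget is the share of events allowed to fail, 1 minus the objective. For example, a 99.9% objective over 1,000,000 requests allows 1,000 failures, so 500 failures spend half the budget. The Python sketch below encodes that calculation. How you count "good" events is an assumption you would define per service.

```python
from dataclasses import dataclass


@dataclass
class SloReport:
    sli: float               # measured success ratio, good / total
    budget_remaining: float  # fraction of the error budget left (negative = overspent)


def evaluate_slo(good_events: int, total_events: int, objective: float) -> SloReport:
    """Compute the SLI and how much of the error budget (1 - objective) remains."""
    if not 0.0 < objective < 1.0:
        raise ValueError("objective must be between 0 and 1, exclusive")
    if total_events == 0:
        return SloReport(sli=1.0, budget_remaining=1.0)  # no traffic, nothing spent
    bad_events = total_events - good_events
    allowed_bad = (1.0 - objective) * total_events
    return SloReport(sli=good_events / total_events,
                     budget_remaining=1.0 - bad_events / allowed_bad)
```

A negative `budget_remaining` is the kind of explicit signal that lets leadership pause feature work in favor of reliability without a debate.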

Shared ownership

Platform teams, product managers, security leads, and operations engineers all influence system behavior.

Clear application ownership, with defined performance and reliability expectations, prevents diffusion of responsibility.

External partners should also meet defined resilience standards. A platform rarely fails in isolation. It fails through interconnected dependencies.

Conclusion: Resilient FinTech Engineering in Practice

FinTech platforms launching today face increasing load, deeper regulatory scrutiny, and more sophisticated adversaries over time. Early architectural shortcuts often resurface years later under far less forgiving conditions.

Security by design, API-first integration, uncompromising data consistency, full-stack observability, architecture built to handle failure, systematic secure coding, and automated resilience validation together create a reinforcing system.

Remove one element, and the overall structure weakens.

Resilient financial systems do not emerge by accident. They result from engineers who understand what is at stake when money, identity, and regulatory obligations intersect. If you need senior fintech engineers to help you build things right the first time, we can assist.

Frequently Asked Questions

What are the core principles of resilient fintech software engineering?

The core principles of resilient fintech software engineering vary depending on whom you ask, but they commonly include security-first architecture, API-first integration, strict transactional consistency, full-stack observability, fault-tolerant design, secure coding enforcement, and automated resilience validation.

Why is data consistency more critical in fintech than in other industries?

Data consistency in fintech matters because even small transactional discrepancies can trigger regulatory violations, reconciliation failures, and direct financial loss.

What does designing for failure mean in financial systems?

Designing for failure means assuming components will fail and planning for it. You build redundancy so that another component can take over when one fails, test failover regularly, and degrade gracefully rather than assuming every component will always remain available.

How do fintech teams move from reactive monitoring to proactive observability?

Fintech teams achieve proactive observability by implementing structured logging, correlation IDs, anomaly detection, and SLI/SLO frameworks that surface early warning signals before customers notice issues.

What is resilience maturity in a fintech organization?

Resilience maturity describes how systematically a fintech company embeds redundancy, monitoring, failover testing, and automated recovery into its architecture rather than relying on manual intervention.

How should fintech companies evaluate engineering talent for resilience?

Fintech companies should evaluate engineering talent based on experience with secure coding standards, distributed transactions, environment separation, compliance-aware architecture, and production-grade monitoring.

Why is automation essential for fintech resilience?

Automation in fintech resilience reduces human error by embedding security scanning, dependency checks, infrastructure as code, and failover validation directly into the CI/CD pipeline.

What role does API-first design play in fintech scalability?

API-first design enables scalable fintech integration by enforcing versioning, governance, and interoperability standards that prevent fragile, tightly coupled system dependencies.