Fintech System Architecture Guide: 6 Decisions That Determine Whether Your Platform Scales

Contents

Share this article

Key Takeaways

- Service boundaries in fintech architecture map to regulatory domains. When a service spans multiple regulatory domains, that creates a compliance accountability gap.

- Two-phase commit fails in high-throughput financial systems because long-lived cross-service locks degrade availability under load. The Saga pattern replaces it with local transactions and compensating logic.

- Event-driven communication via Kafka requires schema versioning enforced by a schema registry.

- Fintech observability is made up of three layers, including operational metrics, business event tracing, and an immutable audit log. The first two answer operational questions while the third answers legal and regulatory ones.

- Resilience patterns in fintech must validate idempotency keys before retrying any state-changing operation to prevent issues like a duplicate charge.

If you aren’t careful, the architecture decisions made in the first six months start producing compliance gaps, reconciliation drift, and availability problems. These issues tend to show up once you start scaling, making them incredibly expensive to address.

The solution is a fintech system architecture that applies standard distributed systems patterns.

Service boundaries follow regulatory domains. Cross-service consistency uses Saga instead of distributed 2PC, and event-driven communication requires schema versioning and exactly-once financial semantics.

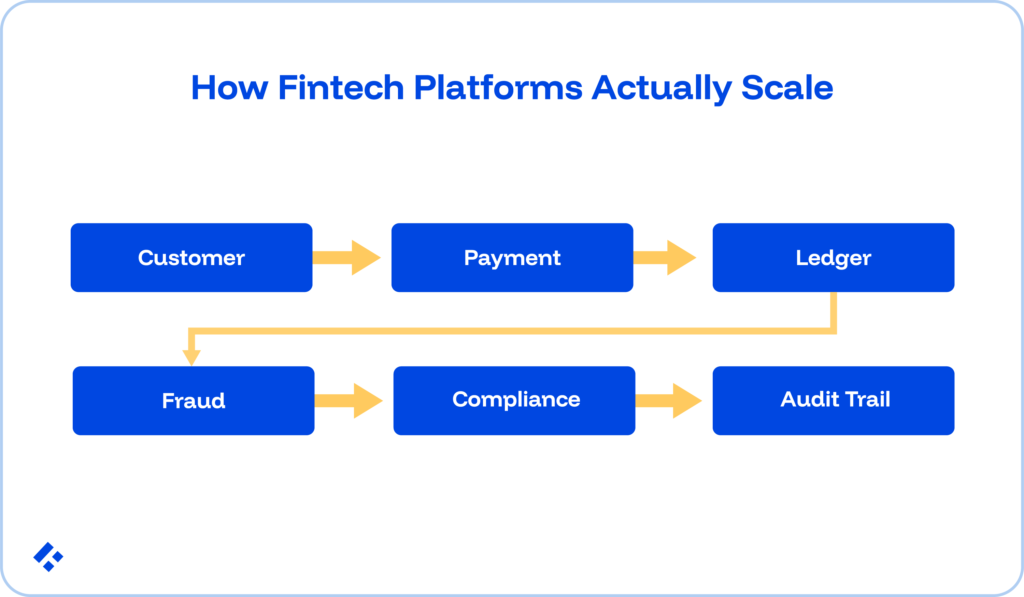

Let’s look at six fintech system architecture decisions that determine whether your platform scales:

- Service Boundaries

- Cross-Service Consistency

- Inter-Service Communication

- Data Storage

- Observability

- Resilience

We’ll cover what you should consider when making your decision, what the common mistake looks like in production, and what the fintech-correct pattern actually requires.

At Trio, we keep pre-vetted fintech specialists on hand at all times who can help you make these decisions and build an application that holds up long-term, as your financial applications scale.

Decision 1: Service Boundaries

In general, service boundaries form around technical concerns. You’ll have authentication, user profiles, API layers, and more, all separate from the processing layer.

Domain-Driven Design improves on this since you draw boundaries around business capabilities rather than technical layers. In fintech, DDD also considers things like regulatory accountability.

Multiple regulatory frameworks govern different fintech activities simultaneously.

For example, a payment service operates under payment network rules (Visa/Mastercard operating rules, NACHA rules) and may require a separate money transmitter license in certain jurisdictions, while a KYC/AML service operates under BSA, AMLD6, or FCA regulations.

Lending services, on the other hand, fall under TILA, ECOA, and CFPB rules, while fraud detection services operate under card network chargeback dispute rules.

These frameworks are reviewed by specific regulators and follow specific audit timelines, which means it’s ideal to separate them from your architectural boundaries.

When your payment service contains fraud logic, when lending code embeds KYC checks, you've created a compliance accountability gap, as it’s no longer clear which team answers for issues.

To prevent this, each bounded context should correspond to a single regulatory domain, owned by a single team, and auditable as a standalone unit.

The most common trap we see our clients falling into is distributed monoliths.

In these cases, services are technically separate (different deployments, different repositories) but tightly coupled at the data and logic level. The system ultimately behaves like a monolith, since changes in one require changes in all, and failures cascade across every service in the chain.

Decision 2: Cross-Service Consistency

When a financial operation spans multiple services, you face a distributed transaction.

The standard computer science solution runs on two-phase commit (2PC), in which all participants lock their resources, the coordinator confirms all are ready, and then all commit simultaneously.

2PC fails in fintech for two specific reasons.

First, it requires all participating services to hold locks for the full coordination round.

A high-throughput payment system processing thousands of transactions per second creates lock contention on financial accounts, which means that other transactions can't read or update the same accounts while those locks are held.

Second, 2PC assumes all participating services remain reachable. When a participant fails mid-coordination, the coordinator must block until recovery or make a heuristic decision about committing.

The Saga pattern

A Saga decomposes the distributed transaction into a sequence of local transactions, each completing independently and publishing an event that triggers the next step. If a step fails, the Saga executes compensating transactions.

These are actions that work to reverse the preceding steps.

In a typical payment system, the payment service creates a payment record and debits a hold, publishing PaymentHoldCreated. The ledger service then posts a pending debit entry, publishing LedgerDebitPosted.

Finally, the fraud service evaluates the transaction, publishing either FraudEvaluationPassed or FraudEvaluationFailed.

If this evaluation passes, then the payment captures and the ledger posts the settled entry.

However, if it fails, then the Saga pattern kicks in, compensating transactions that reverse the hold and the pending debit without requiring cross-service coordination.

Each step remains atomic locally. And, instead of a system-wide coordination attempt, failure at any step triggers specific compensating logic.

Orchestration versus choreography

A Saga can run as orchestrated or choreographed.

Orchestrated Sagas happen when a central orchestrator sends commands to each participant, and are easier to trace and debug since the orchestrator holds the entire transaction logic and its current state.

Choreographed Sagas, where each service reacts to what is published by the previous step, reduce coupling but make failure diagnosis harder.

Orchestration’s auditability tends to make it the more practical choice for financial applications.

Decision 3: Inter-Service Communication

Synchronous service-to-service communication creates temporal coupling. Service A processes a request only when Service B is available.

In a payment system where the ledger service is a dependency of the payment service, a ledger outage stops payment processing.

Fintech availability requirements typically run at 99.99% or higher. Temporal coupling through synchronous calls is not an option.

Kafka as the financial event spine

Apache Kafka has become the dominant event streaming platform for fintech architectures because it provides the specific properties financial systems require.

These properties include durability (events persist and can replay), at-least-once delivery with idempotent consumers for exactly-once financial effects, partition-based ordering for per-account event sequencing, and replay capability for audit and disaster recovery scenarios.

It is exactly for these reasons that we often recommend Kafka when building a financial event spine.

Every state change in every domain service publishes an event to a topic. Services that care about that change subscribe and process asynchronously, independently of each other.

Schema versioning as a non-negotiable requirement

Financial events carry long operational lifetimes. A PaymentCaptured event published today may need to be replayed during a disaster recovery exercise 18 months from now.

The schema of that event must remain stable across that period. A schema registry like Confluent Schema Registry or AWS Glue Schema Registry enforces these contracts across services and catches breaking changes before they reach production.

Exactly-once financial semantics via the outbox pattern

Kafka's at-least-once delivery means consumers may process the same event more than once.

For financial operations like ledger postings and reconciliation records, they are at risk of incorrect results if something is processed twice.

The outbox pattern closes this.

When a service writes a state change to its local database, it atomically writes the corresponding event to an outbox table in the same database transaction. A separate outbox processor publishes from the outbox to Kafka.

At-least-once Kafka delivery gets handled by idempotent consumers that check event IDs before processing, making the end-to-end effect exactly once, even though the delivery mechanism isn't.

Decision 4: Data Storage

Using a single database type for all financial data tends to be both a performance mistake and a compliance mistake.

Different financial data carries different access patterns, consistency requirements, and compliance constraints, and the storage technology that serves each type well tends to be different.

The polyglot persistence model for fintech.

- Transactional database (PostgreSQL): the canonical store for financial records like ledger entries, payment records, and account states.

- Read-optimized cache (Redis): hot balances, session tokens, idempotency key lookups.

- Document store (MongoDB): flexible schemas for KYC/AML case management, fraud investigation records, and compliance event logs where data structure evolves alongside regulatory requirements.

- Analytical warehouse (Snowflake, BigQuery, Databricks): aggregate analytics, regulatory reporting (CCAR, DFAST, BCBS 239 data lineage), ML feature stores for fraud detection models.

Data residency as an architectural constraint

For fintechs operating across jurisdictions, customer financial data may be legally required to remain within the customer's home jurisdiction.

This means you will need region-specific database instances with a routing layer that directs transactions to the appropriate regional database based on customer residency. Analytical aggregation across regions uses non-PII data only.

However, a multi-region architecture can't be retrofitted onto a system designed as a single-region. The data residency routing layer needs to exist from the first deployment, not added after the first cross-border regulatory inquiry.

Decision 5: Observability

In a general SaaS application, observability answers operational questions: is the service up, what is its latency, what is the error rate?

In a fintech system, observability must also answer a variety of other questions, like account balances and the events leading up to an error.

These are audit and regulatory questions. Standard observability tools don't answer them. A three-layer architecture does.

- Operational observability: Prometheus metrics, Grafana dashboards, ELK stack, or equivalent. Answers latency, error rate, throughput, and infrastructure health. Necessary, but insufficient for fintech.

- Business event tracing: Every significant financial event gets recorded with a correlation ID, timestamp, user identity, service version, and causal event. This creates a traceable chain from user action to financial outcome. Distributed tracing (Jaeger, Zipkin, AWS X-Ray) connects service-level spans into end-to-end traces.

- Immutable audit log: Every write to a financial record gets recorded without the possibility of modification. Role-based access controls prohibit DELETE or UPDATE operations on this log at the database level.

Decision 6: Resilience

Every distributed system needs resilience patterns like circuit breakers to stop calling failing services, bulkheads to isolate failure domains, and retry logic to recover from transient errors.

Each of these patterns carries a constraint that is specific to fintech architecture.

In a general microservices architecture, the worst outcome of retrying a failed HTTP request tends to be a duplicate database record.

In a financial system, a retry that isn't idempotency-safe charges a customer twice, posts a duplicate ledger entry, or submits a duplicate ACH transaction.

A circuit breaker opens after N consecutive failures and stops routing requests to the failing service for a cooldown period.

In fintech, it needs to be configured to account for the difference between idempotent operations and non-idempotent operations.

Circuit breaker recovery for non-idempotent operations includes verifying the state of in-flight operations before re-routing.

A bulkhead isolates failure in one service from propagating to others. These play a major role in regulatory isolation. The payment processing thread pool is isolated from the KYC thread pool so that a KYC service outage does not occur.

Exponential backoff with jitter is also a great way to prevent retry storms.

Every retry of a state-changing operation must first check whether the operation has already succeeded. The idempotency key lookup happens before the retry, not after.

Architecture Decision Record: The Quick Reference

Each of the six decisions above has a fintech-specific correct answer that differs from the general software engineering default.

| Architectural Decision | Common Mistake | Fintech-Correct Pattern |

| Service boundary definition | Draw by technical layer (API, logic, DB) | Draw by regulatory domain; each bounded context maps to a single regulatory owner |

| Cross-service data consistency | Use distributed 2PC for multi-service financial transactions | Use Saga pattern; local transactions with compensating logic; no long-lived cross-service locks |

| Inter-service communication | Synchronous REST calls between financial services | Event-driven via Kafka; outbox pattern for exactly-once financial semantics; schema registry for event versioning |

| Financial data storage | Single database for all financial data | Polyglot persistence: PostgreSQL (transactional), Redis (hot balances), warehouse (analytics/reporting); data residency routing per jurisdiction |

| Observability | Operational metrics and error rate dashboards | Three-layer: operational observability + business event tracing + immutable audit log with correlation IDs and service version |

| Resilience | Generic retry logic with exponential backoff | Idempotency-key validation before every state-changing retry; bulkheads aligned with regulatory isolation boundaries; circuit breaker differentiation between idempotent and non-idempotent operations |

The Modular Monolith as a Valid Starting Point

The six decisions above help you build a production-scale fintech system, but there are other considerations you need to make for a pre-launch fintech MVP.

A modular monolith is a single deployable unit organised into well-defined internal modules, each corresponding to a bounded context. This is a more realistic option for early-stage fintech builds.

It provides the domain boundary discipline that microservices require without the operational overhead of distributed deployment. ACID transactions work across domain boundaries without Saga complexity.

Observability also stays a lot simpler since you deal with one application, one log stream, and one trace. Changing a payment flow that touches the ledger module doesn't require deploying two separate services.

However, if you decide to go this way, your developers need to be very diligent when it comes to defining the internal module boundaries as if they were service boundaries from the start.

They need to apply the same regulatory domain logic, the same clean interface contracts between modules, and the same prohibition on direct cross-module database access.

The Technology Stack: Reference Implementation per Layer

Each architectural decision maps to specific technology choices the fintech engineering community has converged on over the past several years.

- Service decomposition and inter-service contracts: OpenAPI or gRPC for synchronous contracts. Avro or Protobuf with Confluent Schema Registry for event contracts.

- Saga orchestration: Temporal for durable workflow orchestration, which handles Saga coordination with built-in retry, compensation, and state persistence. AWS Step Functions for cloud-native deployments.

- Event streaming: Apache Kafka (Confluent Cloud or AWS MSK for managed). Kafka Streams or Apache Flink for stateful stream processing.

- Transactional database: PostgreSQL as the canonical choice for ACID financial records. CockroachDB or Google Spanner for multi-region deployments requiring distributed transactional consistency.

- Caching layer: Redis (Elasticache or Redis Cloud) for hot balance caching, idempotency key lookups, and rate limiting at the API gateway layer.

- Observability stack: OpenTelemetry for distributed tracing instrumentation. Jaeger or Datadog APM for trace visualisation. Prometheus and Grafana for metrics. A custom audit log service backed by append-only PostgreSQL tables.

- Container orchestration: Kubernetes (EKS, GKE, or AKS). A service mesh provides mTLS between services, traffic management, and observability at the network layer.

Who Architects Fintech Systems

A fintech architect needs to know not only basic Saga systems, but also which compensating transactions to implement when a payment Saga fails at the fraud evaluation step, how that compensating transaction interacts with the pending ledger entry, what the customer notification requirements are during the compensation window, and whether the specific regulatory framework governing the payment type requires disclosure of the temporary hold.

In other words, they need to have accumulated judgment by having built these systems and having seen them fail in specific ways.

At Trio, we place engineers who have made these architectural decisions in production financial systems across payments, KYC/AML, ledger infrastructure, fraud detection, and open banking. Our skilled professionals pre-vet them for domain competencies.

For teams that need specific engineering roles within a fintech architecture build, hiring fintech developers or building a dedicated fintech engineering team are both available starting points, depending on the stage and scope of the build.

Request a consult.

Frequently Asked Questions

Domain-driven design matters specifically for fintech architecture because service boundaries determine regulatory accountability. Each bounded context should correspond to a single regulatory domain (payments, KYC/AML, lending, fraud detection) owned by a single team and auditable as a standalone unit.

The Saga pattern decomposes a distributed financial transaction into a sequence of local transactions, each completing independently with compensating logic if a subsequent step fails. Two-phase commit requires all participating services to hold locks simultaneously, which degrades availability in high-throughput financial systems where lock contention on accounts is a real and measurable performance problem.

A modular monolith is a single deployable application organised into well-defined internal modules, each corresponding to a bounded context (payments, KYC, ledger). Early-stage fintechs building toward product-market fit generally benefit from starting here.

Standard application monitoring answers operational questions regarding latency, error rate, throughput, and infrastructure health. Fintech observability must additionally answer legal and regulatory questions, which require an immutable audit log.

The core difference between resilience engineering in fintech compared to general software is that, in general software, a retry of a failed operation produces a duplicate record at worst. In fintech, a retry of an insufficiently idempotent operation produces a duplicate charge, a duplicate ledger posting, or a duplicate ACH submission.

Expertise

- JavaScript

- NGX

- HTML

- Node.js

- Vue.js

Subscribe to our newsletter

Related

Content

Continue Reading